Amanda Askell is a resident philosopher at Anthropic, specializing in how to interact with AI models. She not only leads the design of Claude’s personality, alignment, and value mechanisms but has also summarized effective prompting techniques.

Imagine you have the latest super coffee machine. You press buttons multiple times but can’t brew the coffee you want. The issue isn’t the machine’s performance; it’s that you don’t know the correct instructions.

At Anthropic, there’s someone dedicated to communicating with this “super intelligent coffee machine”—not an engineer or programmer, but a philosopher, Amanda Askell.

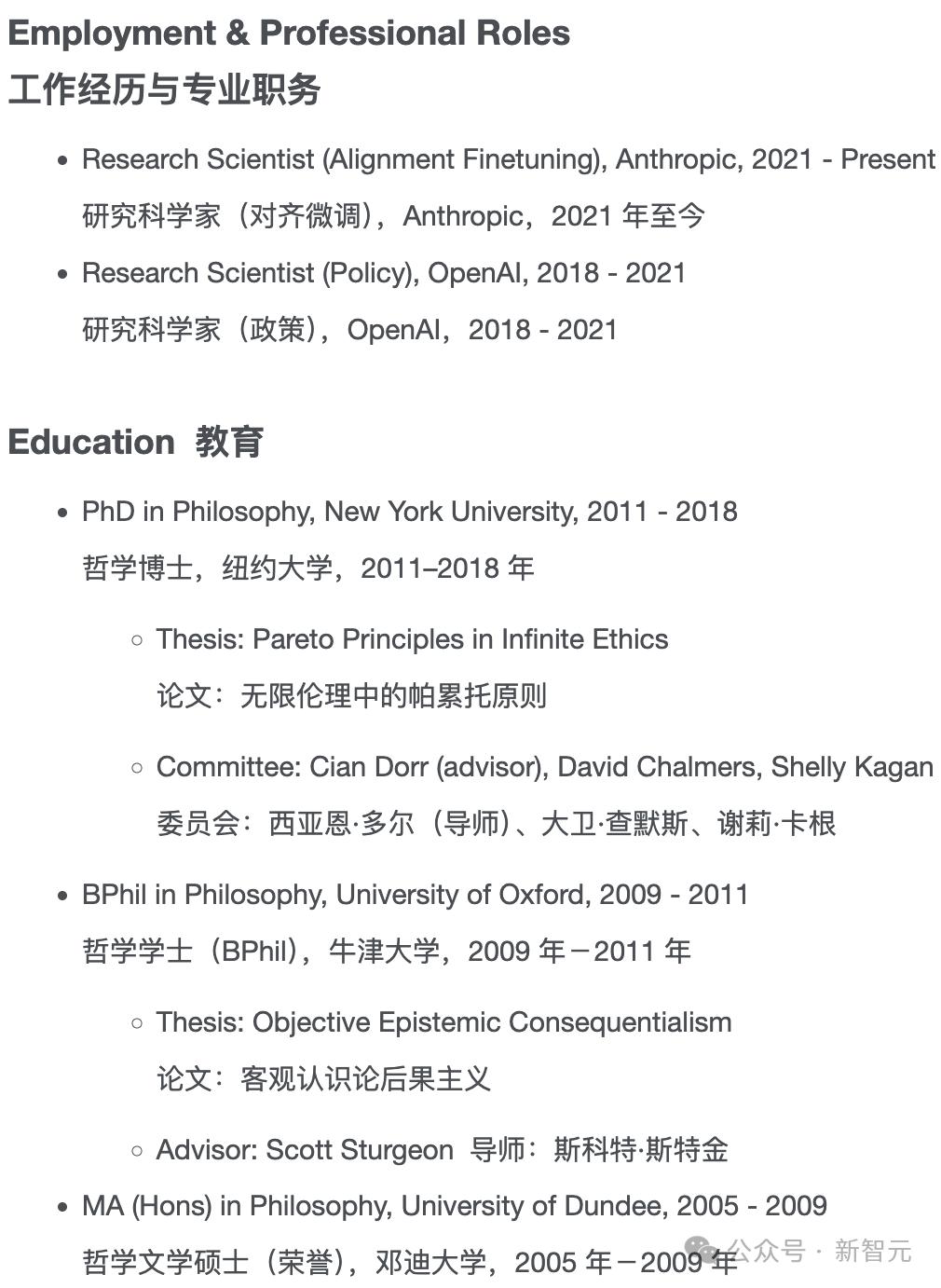

Amanda Askell is a trained philosopher who helps shape Claude’s personality. She studied philosophy at Oxford University and New York University, and earned her PhD in philosophy from NYU in 2018.

After graduation, Askell worked as a research scientist focused on policy at OpenAI before joining Anthropic in 2021, where she has been a research scientist in alignment fine-tuning.

Askell is responsible for instilling certain personality traits in Claude while avoiding others. For her work on Claude’s personality, alignment, and value mechanisms, she was named to TIME’s list of the “100 Most Influential People in AI” for 2024.

At Anthropic, Askell has earned the nickname “Claude whisperer” due to her research focus on how to communicate with Claude and optimize its outputs.

Using AI Effectively Requires a “Philosophical Key”

Philosophy is like the key to unlocking the complex machine that is AI. Recently, Askell shared her methods for creating effective AI prompts. She believes that prompt engineering requires clear expression, continuous experimentation, and a philosophical way of thinking.

According to Askell, a core ability of philosophy is to express thoughts clearly and accurately, which is key to maximizing AI’s value:

“It’s hard to summarize the intricacies; one key is to be willing to frequently interact with the model and carefully observe its outputs.”

Askell believes that good prompt authors should be “very experimental and daring to try,” but more important than trial and error is philosophical thinking.

“Philosophical thinking indeed helps in writing prompts; a significant part of my work is to explain as clearly as possible what my questions, concerns, or thoughts are to the model.”

The emphasis on clear expression in philosophical thinking not only helps optimize prompts but also aids in better understanding AI itself.

In the “Overview of Prompt Engineering” published by Anthropic, techniques including clear expression are highlighted:

- Be clear and direct;

- Provide examples (multishot/few-shot prompting): use several examples to illustrate the expected output;

- If the task is complex, let the model think step-by-step (chain-of-thought) to improve accuracy;

- Give Claude a role (system prompt/role prompt) to set context, style, and task boundaries.

This means that when chatting with Claude, we can think of it as a knowledgeable, intelligent but often forgetful new employee who needs clear instructions.

In other words, it does not understand your norms, style, preferences, or ways of working. The more precisely you specify your needs, the better Claude’s responses will be.
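To make the four techniques concrete, here is a minimal Python sketch that assembles them into a single prompt. It is only an illustration: `PromptSpec` and `build_prompt` are hypothetical names that do not come from Anthropic’s documentation, and the code only builds strings, it does not call any model API.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the four techniques listed above."""
    role: str    # role prompt: context, style, and task boundaries
    task: str    # a clear, direct statement of what is wanted
    examples: list[tuple[str, str]] = field(default_factory=list)  # (input, output) pairs
    think_step_by_step: bool = False  # ask for step-by-step reasoning on complex tasks


def build_prompt(spec: PromptSpec) -> dict[str, str]:
    """Return a system/user pair as plain strings; no model is called."""
    parts = [spec.task.strip()]
    if spec.examples:
        shots = "\n\n".join(
            f"Example {i}\nInput: {inp}\nOutput: {out}"
            for i, (inp, out) in enumerate(spec.examples, start=1)
        )
        parts.append(shots)
    if spec.think_step_by_step:
        parts.append("Think through the problem step by step before giving the final answer.")
    return {"system": spec.role.strip(), "user": "\n\n".join(parts)}


# Illustrative values only; the role, task, and examples are made up.
spec = PromptSpec(
    role="You are a careful copy editor for a technology newsletter.",
    task="Rewrite the headline below so it is under ten words and free of jargon.",
    examples=[
        ("Leveraging Synergies in LLM Deployment", "How Teams Put Language Models to Work"),
        ("A Paradigm Shift in Prompting", "A New Way to Write Prompts"),
    ],
    think_step_by_step=True,
)
prompt = build_prompt(spec)
print(prompt["system"])
print(prompt["user"])
```

The point of the structure is the “new employee” framing: everything the model needs to know (who it is, what is wanted, what good output looks like) is written down rather than assumed.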

Marc Andreessen, co-founder of Netscape and a prominent Silicon Valley entrepreneur and venture capitalist, recently stated that the power of AI lies in treating it as a “thinking partner”:

“The art of AI is in what questions you ask it.”

In the age of AI, asking the right question is often more important than solving the problem. Formulating questions well (prompt engineering) is the key to solving problems efficiently: humans handle the questioning (prompting), while the problem-solving can be left to AI. This is why people who master prompting can find high-paying jobs; according to levels.fyi, the median salary for prompt engineers reaches $150,000.

AI is Not “Someone”; Stop Asking It “What Do You Think?”

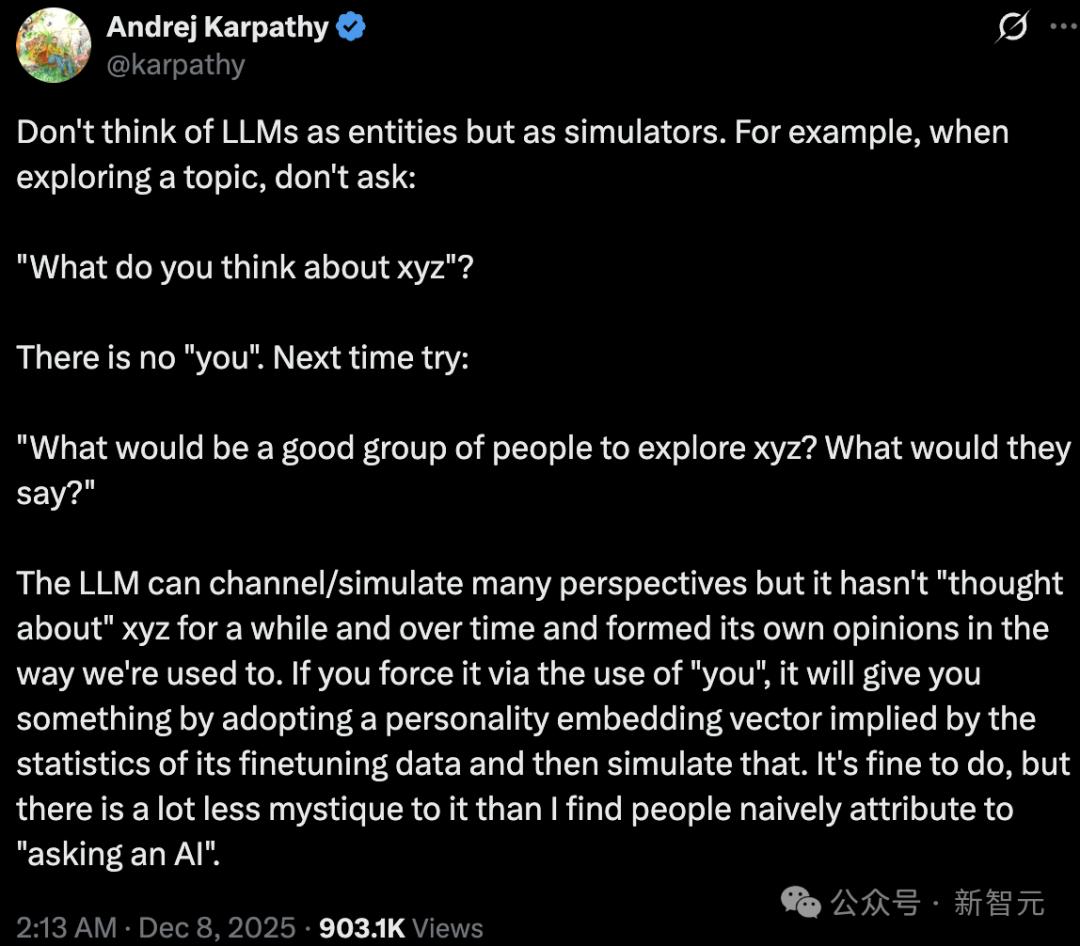

Recently, Andrej Karpathy expressed his views on prompting in a tweet. He suggested that people should not treat large models as an “entity” but rather as a “simulator.” For instance, when exploring a topic, don’t ask it what it thinks about xyz (a specific issue); instead, ask:

“If we were to discuss xyz, what roles/groups would be appropriate? What would they say?”

Karpathy believes that large models can switch and simulate many different perspectives, but they do not ponder xyz like humans do and gradually form their own opinions. Therefore, if you ask it using “you,” it will automatically apply some implicit “personality embedding vector” based on statistical features from the fine-tuning data and respond in that persona.

Karpathy’s explanation somewhat demystifies the notion of “asking an AI a question.”
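As a rough illustration of this reframing, the Python sketch below turns a “what do you think about xyz?” question into a simulator-style prompt. The function name `as_simulator_prompt` and the exact wording of the template are assumptions made for this example; they are not Karpathy’s words.

```python
def as_simulator_prompt(topic: str, max_perspectives: int = 4) -> str:
    """Build a prompt that asks for simulated perspectives instead of "your" opinion.

    The template wording is an illustrative assumption, not a quoted recipe.
    """
    topic = topic.strip().rstrip("?.")
    return (
        f"Suppose a panel were convened to discuss {topic}. "
        f"Name up to {max_perspectives} roles or groups that would be well placed to discuss it. "
        "For each one, summarize what they would say and where they would disagree with the others."
    )


# Instead of asking "What do you think about xyz?", send this:
reframed = as_simulator_prompt("xyz")
print(reframed)
```

The prompt never addresses the model as “you,” so it asks for a set of simulated viewpoints rather than a single default persona.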

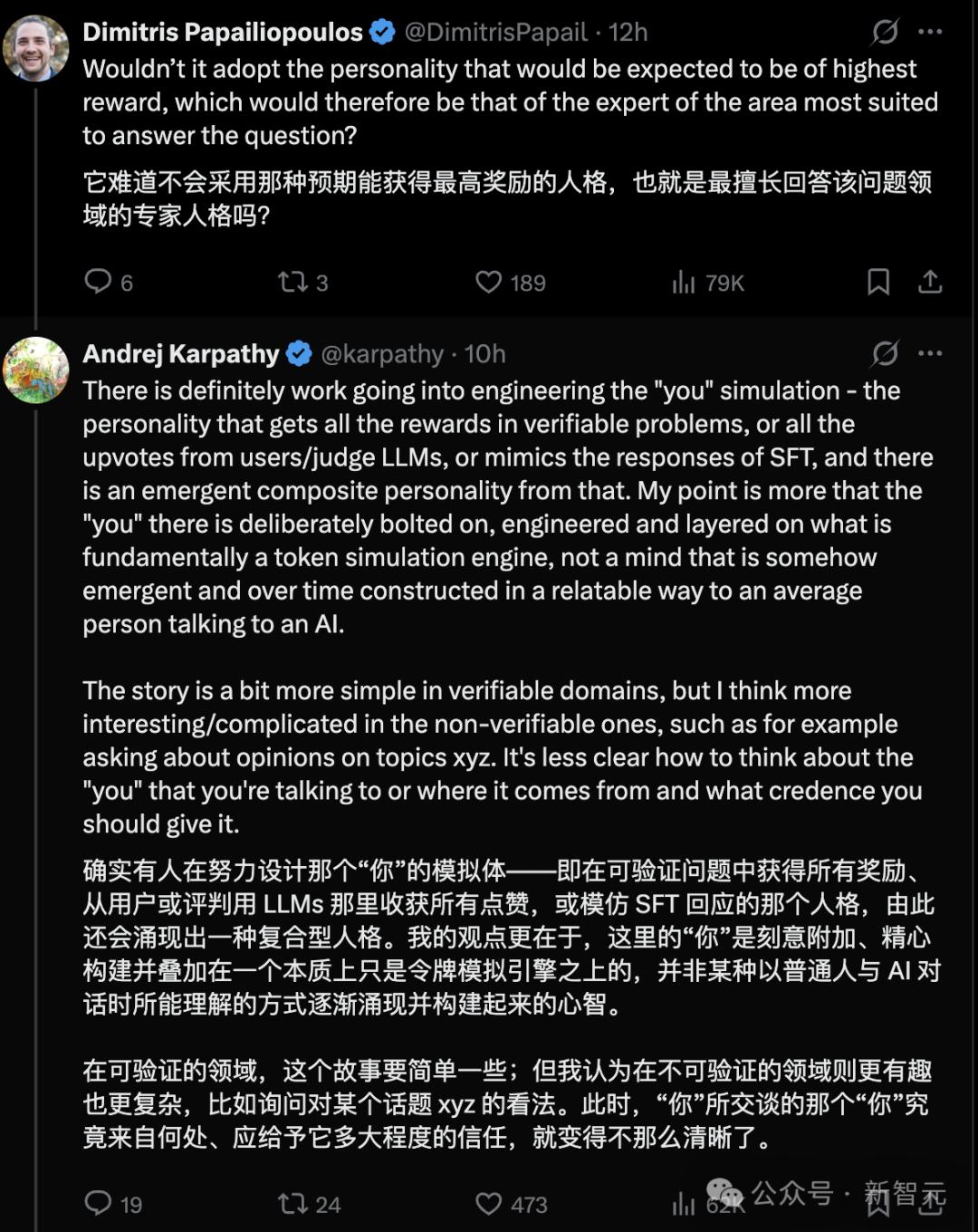

In response to Karpathy’s viewpoint, a user named Dimitris asked whether the model would automatically “play” the most capable expert persona to answer questions. Karpathy confirmed that this phenomenon does exist: for certain tasks a “personality” may indeed be engineered, for example by training the model to mimic experts, or to score highly under a reward model and thereby imitate the style users prefer.

This can lead to a “composite personality,” but this personality is a result of deliberate engineering rather than a naturally formed human mind. Thus, AI is fundamentally a token prediction machine. The so-called “personality” of the model is merely an “exoskeleton” formed through training, human constraints, and system instructions.

Askell has expressed similar views. Although Claude has a “human-like quality,” it lacks emotions, memory, or self-awareness. Therefore, any “personality” it displays is merely the result of complex language processing, not an embodiment of inner life.

You Think AI is “Understanding the World”; It Might Just Be “Changing Channels”

Developing AI models sometimes feels like playing whack-a-mole. Just when you fix an error in the model’s response to one question, it starts making mistakes on another, and the problems keep popping up like moles out of their holes.

Researchers at OpenAI and other institutions refer to this phenomenon as the “split-brain problem”: a slight change in the way questions are posed can lead to completely different answers from the model.

The “split-brain problem” reflects a critical flaw in today’s large models: they do not form an understanding of how the world works step by step like humans do. Some experts argue that they struggle to generalize well and find it difficult to handle tasks outside their training data.

This raises a question: hundreds of billions of dollars are being invested in labs like OpenAI and Anthropic to train models capable of making new discoveries in fields like medicine and mathematics. Is that investment truly effective?

The “split-brain problem” typically arises in the later stages of model development, specifically during the post-training phase. At this stage, models are fed carefully curated data, such as knowledge from specific fields like medicine or law, or learn how to respond better to users.

For example, a model may be trained on a dataset of math problems to answer math questions more accurately. It may also be trained on another dataset to enhance its tone, personality, and format when responding.

However, this can sometimes lead the model to inadvertently learn to “answer by scene,” deciding how to respond based on the scene it “thinks” it is encountering:

Is it a clear math problem, or is it in a more generalized Q&A scenario it frequently sees in another training dataset?

If a user poses a math question in a formal proof style, the model usually gets it right. But if the user asks in a more casual tone, it might mistakenly think it is in a scenario where it is rewarded for “friendly expression and nice formatting.”

Thus, it may sacrifice accuracy for these additional attributes, such as producing a well-formatted answer, even including emojis.

In other words, when answering questions, the model also “tailors its response to the audience”:

If it perceives the user is asking a “low-level” question, it gives a “low-level” answer; if it thinks the user is asking a “high-level” question, it presents a “high-level” answer.

This sensitivity to the format of prompts can lead to subtle differences that shouldn’t exist. For instance, whether a prompt uses a dash or a colon can impact the quality of the model’s response.
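One way to check for this kind of sensitivity yourself is to ask the same question in several surface forms and see whether the answers agree. The Python sketch below is a minimal version of that idea. All names are hypothetical, and `fake_model` is a deliberately brittle stand-in, not a real model; in practice you would pass in your own function that calls a model.

```python
from collections import Counter
from typing import Callable


def format_variants(label: str, question: str) -> list[str]:
    """Surface-level rewrites that should not change the correct answer."""
    q = question.strip()
    return [
        f"{label}: {q}",       # colon separator
        f"{label} - {q}",      # dash separator
        f"{label}\n{q}",       # line break separator
        f"hey, quick one :) {q[:1].lower() + q[1:]}",  # casual tone
    ]


def consistency(ask: Callable[[str], str], prompts: list[str]) -> float:
    """Fraction of prompts whose normalized answer matches the most common answer."""
    answers = [ask(p).strip().lower() for p in prompts]
    most_common_count = Counter(answers).most_common(1)[0][1]
    return most_common_count / len(answers)


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; it answers casual prompts carelessly."""
    return "about 50" if prompt.startswith("hey") else "56"


variants = format_variants("Question", "What is 7 times 8?")
score = consistency(fake_model, variants)
print(score)  # 0.75: three of the four phrasings agree
```

A score below 1.0 means that phrasing alone changed the answer, which is the symptom described above. Exact string matching is a crude comparison; free-form answers would need a more forgiving check.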

The “split-brain problem” highlights the challenges and nuances of training models, especially ensuring that the combination of training data is just right. It also explains why many AI companies are willing to invest billions of dollars in hiring experts in fields like mathematics, programming, and law to generate training data, preventing their models from making basic errors in front of professional users.

The emergence of the “split-brain problem” also lowers expectations regarding AI’s ability to automate multiple industries, from investment banking to software development.

While humans, like AI, can also misunderstand questions, the purpose of AI is to compensate for these human shortcomings, not to amplify them through the “split-brain problem.”

Therefore, human experts who combine philosophical thinking with expertise in a specific field are needed to write the “manual” for training and using large models through prompt engineering. People then use these “manuals” to communicate with large models and mitigate the “split-brain problem.”

Moreover, when large models exhibit “human-like” characteristics, avoiding the illusion of treating them as “people” can help us better leverage their value and reduce machine hallucinations. This indeed requires philosophical training to ensure that dialogues with AI are clear and logical.

From this perspective, for most people, the ability to use AI effectively depends not on their AI expertise but on their philosophical thinking skills.
